Why You Need More Than "One Good Study" To Evaluate EdTech

MIND Research Institute

JANUARY 16, 2018

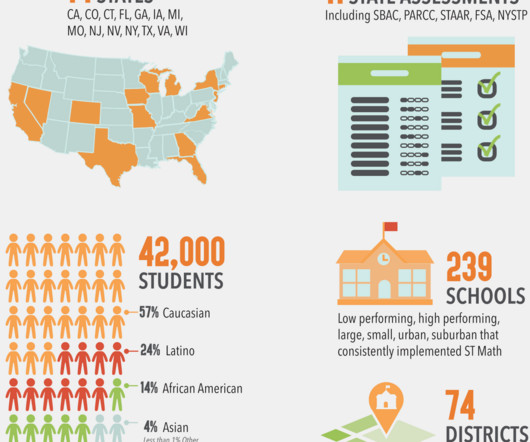

Highly credible edtech evaluation lists give a program top marks on the strength of just one RCT (randomized controlled trial). Yet different states use different assessments, and a single study uses only one assessment (a state test), so its results are specific to that assessment. The time has come for a shift in how we evaluate edtech programs.
